shallow neural network

2018, Sep 22

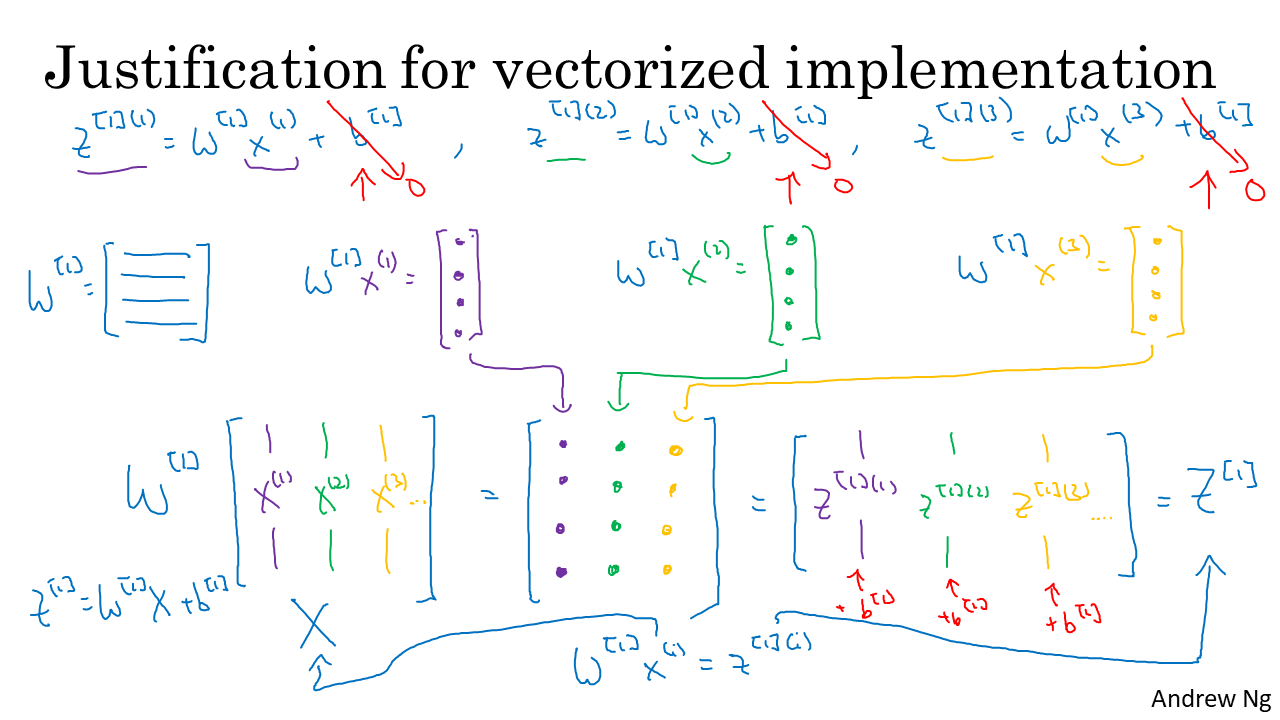

Explanation of the vectorized implementation

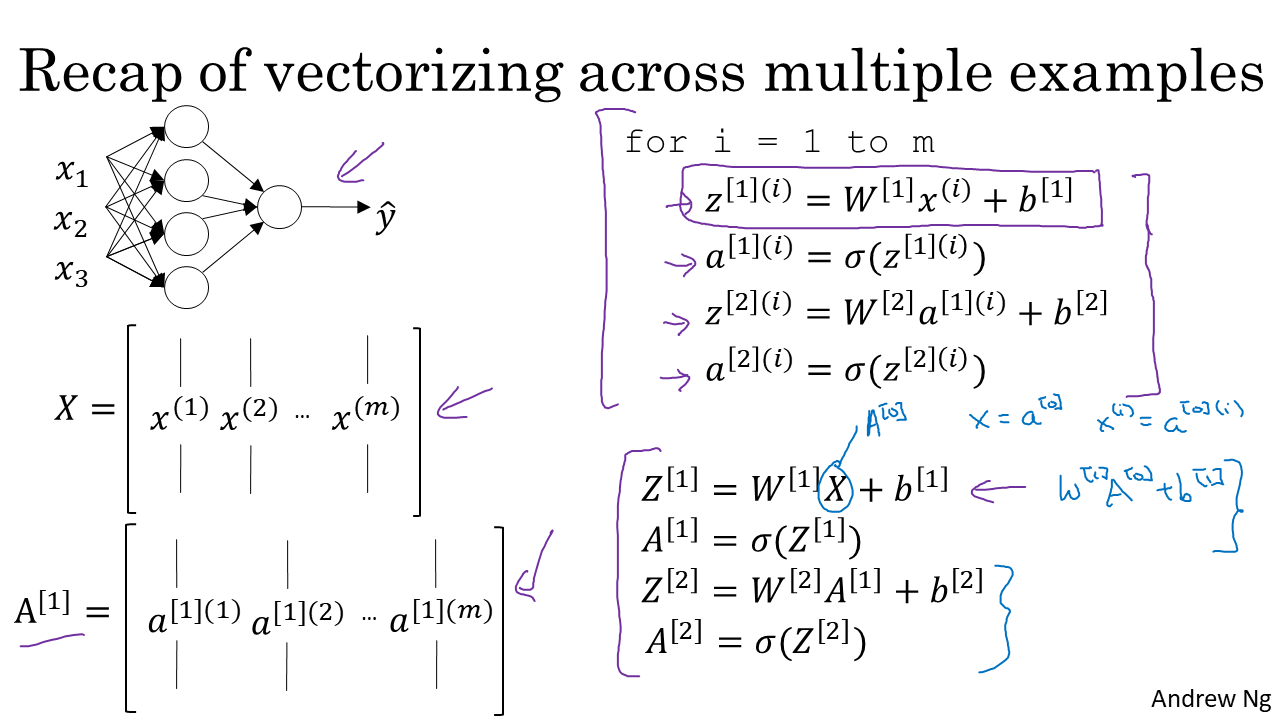

In this lecture, we study the vectorized implementation. It’s simple: with vectorization, we don’t need a for-loop over every training example.

We just define the data X, the weights W (plus the bias b), and do the math with NumPy.
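Here is a minimal sketch of the idea for the first layer. It assumes a sigmoid activation and the lecture's convention that training examples are stacked as the columns of X. The layer sizes, the random initialization, and the `sigmoid` helper are illustrative choices, not taken from this post. The loop and the single matrix product give the same result.

```python
import numpy as np

def sigmoid(z):
    # Element-wise logistic function
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)
n_x, n_h, m = 3, 4, 5                      # input features, hidden units, examples (assumed sizes)
X = rng.standard_normal((n_x, m))          # column i is the example x^(i)
W1 = rng.standard_normal((n_h, n_x)) * 0.01
b1 = np.zeros((n_h, 1))

# Loop version: process one example (one column) at a time
A1_loop = np.zeros((n_h, m))
for i in range(m):
    z = W1 @ X[:, i:i+1] + b1              # shape (n_h, 1)
    A1_loop[:, i:i+1] = sigmoid(z)

# Vectorized version: all m examples at once; b1 broadcasts across the columns
Z1 = W1 @ X + b1                           # shape (n_h, m)
A1 = sigmoid(Z1)

print(np.allclose(A1, A1_loop))            # True
```

Broadcasting is what lets the `(n_h, 1)` bias be added to every column of `W1 @ X` without copying it `m` times.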

To understand what follows, recall the notation.

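\(a^{[1](i)}\): activation of the 1st layer for the i-th training example. The square-bracket superscript is the layer, the round-bracket superscript is the training example, and a single node j of that layer is written with a subscript, \(a^{[1](i)}_j\).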
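In the vectorized form, this notation maps directly onto matrix indexing: \(a^{[1](i)}\) is column i of \(A^{[1]}\), and node j of that vector is row j. The sketch below assumes a tanh hidden layer and small illustrative sizes (not from this post). Note that NumPy indexes from 0, while the lecture notation counts from 1.

```python
import numpy as np

rng = np.random.default_rng(1)
n_x, n_h, m = 2, 3, 4                 # assumed sizes: features, hidden units, examples
X = rng.standard_normal((n_x, m))     # columns are training examples
W1 = rng.standard_normal((n_h, n_x))
b1 = np.zeros((n_h, 1))
A1 = np.tanh(W1 @ X + b1)             # A^{[1]}: rows = hidden units, columns = examples

i, j = 2, 1                           # 0-based example and node indices
a1_i = A1[:, i]                       # a^{[1](i)}: all hidden activations for example i
a1_i_j = A1[j, i]                     # a^{[1](i)}_j: activation of node j for example i

# The same single value computed without vectorization
manual = np.tanh(W1[j] @ X[:, i] + b1[j, 0])
print(np.isclose(a1_i_j, manual))     # True
```

Reading across a row of `A1` moves over training examples; reading down a column moves over hidden units.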